“In the rapidly evolving field of technology, artificial intelligence (AI) is a disruptive force that has not only transformed industries, but has also raised many questions and legal challenges.”

This is ChatGPT's answer when asked to present artificial intelligence in the context of legal challenges.

Is there a definition of artificial intelligence?

Currently, there is no legal definition of artificial intelligence either in Poland or in the European Union, and the situation is similar in other major jurisdictions around the world. Probably the closest thing to a definition of AI is the concept of ‘automated decision-making’ in the GDPR (known in Polish as RODO), which may cover some AI systems.

Article 22 of the GDPR describes automated decision-making as:

“… a decision which is based solely on automated processing, including profiling, and which produces legal effects in relation to (…) a person or significantly affects that person in a similar manner”.

However, this definition in its current form is not specific enough to sufficiently ‘cover’ the concept of artificial intelligence systems as we know them today.

From a legal point of view, artificial intelligence is therefore ‘just’ a technology or a set of technologies and is regulated in the same way as any other technology – through a number of different rules applicable to specific contexts or applications. It can be used for good purposes or to cause harm, its use can be legal or illegal – it all depends on the situation and the context.

Why is the regulation of artificial intelligence so important?

The pace of artificial intelligence development is accelerating. And because artificial intelligence is a ‘disruptive force’, different countries are struggling to describe the technology for legislative purposes. In the past, legislators rarely considered creating new international legislation specifically for a single technology. Recent years, however, have shown that more and more technological breakthroughs require a rapid legal response. One need not look far: think of cloud computing, blockchain and now artificial intelligence.

Moreover, while different parts or components of this technology may be owned by different people or companies (for example, copyright in certain program code or ownership of databases), the idea of artificial intelligence itself is public. And as more and more AI tools and knowledge become available to everyone, in theory anyone can use AI tools or create new ones. This opens the door to potential abuse, which is why regulating the technology is so important.

Why else? Everyone agrees that artificial intelligence has the potential to change the economic and social landscape around the world. This is, of course, already happening, and the process is accelerating every day, which is as exciting as it is frightening. The speed at which new technologies are developing makes their consequences difficult to predict. It is therefore crucial to have some legal principles in place to ensure that artificial intelligence is used in a way that benefits everyone. And since AI is a ‘global phenomenon’, it would be best to have at least a universally agreed legal definition of what artificial intelligence is.

However, this is unlikely to happen globally. Some countries are trying to define artificial intelligence by its purpose or functions, others by the technologies used, and some are combining different approaches. Nevertheless, many key jurisdictions are trying to agree on a definition of AI and find common principles. This is important to avoid practical problems, especially for providers of global AI solutions, who will soon face numerous compliance issues. AI will be able to reach its full potential only if there is at least basic interoperability between jurisdictions.

EU approach

Various countries in the European Union have tried to ‘approach’ the AI issue in many ways. However, if we are looking for a quick answer to the question “what is the most likely definition of AI in the EU?”, most will refer us to the Artificial Intelligence Act, or AI Act, or rather its draft. Member states are deferring concrete decisions until the final version of the AI Act, which will comprehensively regulate the technology at the European level in all member states, is adopted.

The current publicly available version of the AI Act contains the following definition of an artificial intelligence system:

“An AI system is a machine-based system designed to operate with varying levels of autonomy and that may exhibit adaptiveness after deployment and that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments.”

Source: https://www.linkedin.com/feed/update/urn:li:activity:7155091883872964608/

This is in contrast to earlier drafts of the AI Act, going back to the European Commission's 2021 proposal, which defined an artificial intelligence system as “software developed using one or more of the techniques and approaches listed in Annex I that can, for a given set of human-defined purposes, generate outputs such as content, predictions, recommendations or decisions that affect the environments with which it interacts.”

In its definition of an artificial intelligence system, the EU has thus moved closer to the OECD standard.

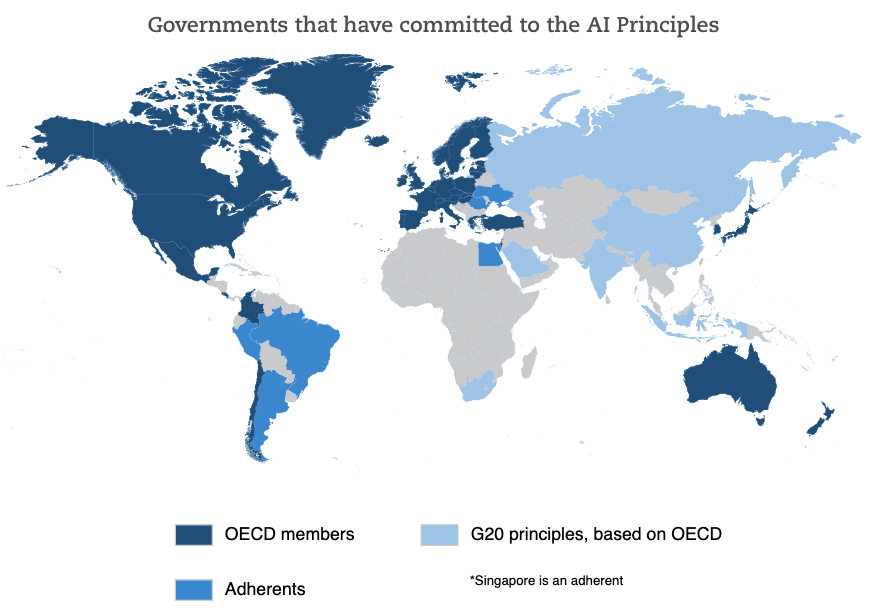

And what is this standard? In November 2023, the OECD (Organisation for Economic Co-operation and Development) updated the definition of AI contained in the OECD AI Principles, the first intergovernmental standard on AI (adopted in 2019). Numerous authorities around the world have committed to applying this definition, either directly or with minor modifications, and the European Union is among them.

Source: https://oralytics.com/2022/03/14/oced-framework-for-classifying-of-ai-systems/

OECD definition of an AI system:

“An AI system is a machine-based system that, for explicit or implicit objectives, infers, from the input it receives, how to generate outputs such as predictions, content, recommendations, or decisions that can influence physical or virtual environments. Different AI systems vary in their levels of autonomy and adaptiveness after deployment.”

Current OECD artificial intelligence system model

Alongside this definition, the OECD Recommendation on Artificial Intelligence sets out five value-based principles for the responsible stewardship of trustworthy AI.

These include:

inclusive growth, sustainable development and well-being;

human-centred values and fairness;

transparency and explainability;

robustness, security and safety;

accountability.

Countries that have committed to the OECD AI Principles should therefore reflect these principles in their own approach (at least in theory). In this respect, the EU is on the right track.

How is artificial intelligence interpreted at a global level?

United States

Unsurprisingly, one of the most active jurisdictions when it comes to artificial intelligence is the United States. According to the National Conference of State Legislatures website, at least 25 states, Puerto Rico and the District of Columbia introduced legislation on artificial intelligence in 2023, and 15 states and Puerto Rico adopted resolutions or enacted legislation in this area. Individual states have taken more than 120 initiatives on general AI issues (legislation on specific AI technologies, such as facial recognition or autonomous cars, is tracked separately).

The approach in the United States therefore varies. As an interesting aside, in May 2023 a bill was introduced in California calling on the US government to impose an immediate moratorium of at least six months on training artificial intelligence systems more powerful than GPT-4, to allow time for the development of an AI governance system. Its status is currently ‘pending’, but it does not seem likely to be adopted.

Regarding the definition of artificial intelligence, there is no uniform legal definition in the US. However, one of the key pieces of AI-related legislation, the National AI Initiative Act of 2020, established the National Artificial Intelligence Initiative Office and defined artificial intelligence as “a machine-based system that can, for a given set of human-defined objectives, make predictions, recommendations or decisions influencing real or virtual environments”. It goes on to explain that “artificial intelligence systems use machine and human-based inputs to (A) perceive real and virtual environments; (B) abstract such perceptions into models through analysis in an automated manner; and (C) use model inference to formulate options for information or action”. However, the Act focuses mainly on organising federal efforts, including the Initiative Office, to support the development of this technology in the United States, rather than on regulating artificial intelligence itself.

The US has committed to the OECD AI Principles. However, there is also other guidance on what to expect from federal AI regulation, and “The Blueprint for an AI Bill of Rights: Making Automated Systems Work for the American People” is the place to start. It was published by the White House Office of Science and Technology Policy in October 2022 and sets out five principles that “help provide guidance whenever automated systems can meaningfully impact the public’s rights, opportunities, or access to critical needs”. These principles are:

1. safe and effective systems

2. algorithmic discrimination protections

3. data privacy

4. notice and explanation

5. human alternatives, consideration and fallback

The Blueprint applies to systems that meet two criteria: (i) they have the potential to meaningfully impact the rights, opportunities or access of individuals or communities, and (ii) they are “automated systems”. An automated system is in turn defined as “any system, software, or process that uses computation as whole or part of a system to determine outcomes, make or aid decisions, inform policy implementation, collect data or observations, or otherwise interact with individuals and/or communities. Automated systems include, but are not limited to, systems derived from machine learning, statistics, or other data processing or artificial intelligence techniques, and exclude passive computing infrastructure.” For clarity, “passive computing infrastructure is any intermediary technology that does not influence or determine the outcome of decision, make or aid in decisions, inform policy implementation, or collect data or observations”; web hosting is one example.

As for other key jurisdictions, none of the following has a widely recognised legal definition of AI, but:

China

China has national-level standards, along with local adaptations, that are based on definitions related to the functionality of artificial intelligence systems.

Hong Kong

Hong Kong has issued guidance on the ethical development and use of artificial intelligence, which defines artificial intelligence as “a family of technologies that involve the use of computer programmes and machines to mimic the problem-solving and decision-making abilities of humans”.

Japan

Japan has set out an ‘AI Strategy 2022’, issued by the Cabinet Office’s Integrated Innovation Strategy Promotion Council. It suggests that ‘AI’ refers to a system capable of performing functions deemed intelligent.

Singapore

Singapore, on the other hand, has defined ‘AI’ as a set of technologies that seek to simulate human characteristics such as knowledge, reasoning, problem solving, perception, learning and planning and, depending on the AI model, produce an output or decision (such as a prediction, recommendation and/or classification). This definition is provided in the Model Artificial Intelligence Governance Framework issued by the Infocomm Media Development Authority and the Personal Data Protection Commission.

***

Attempts to create a legal definition of artificial intelligence are ongoing around the world, and one of the most recent proposals is the OECD's. The enactment of the final version of the AI Act will certainly accelerate the process of unifying the approach to defining AI worldwide. It remains an open question, however, whether some countries will try to ‘distinguish’ themselves with a strongly liberal approach to AI in order to attract the creators of this technology (with little regard for the legal and ethical aspects).

Authors: Mateusz Borkiewicz, Agata Jałowiecka, Grzegorz Leśniewski